Fifteen films directed by Jean-Luc Godard are represented in the middle of the screen. Each film is on one line, and time is represented by the x axis.

The films have been automatically segmented into cuts by a simple algorithm I wrote that looks for discontinuities between frames. Moving the mouse over a cut launches an image preview above. Clicking with the right mouse button changes the preview, and with the left it begins to play.

The movie plays through it's image representation, which can be used to seek to arbitrary points within the clip; successive clips can be selected while the first is playing.

This interface allows playing the film out of order. New orderings can be generated computationally as well. Pressing the space bar causes clips to be scattered across the screen based on their color. Hue is mapped to the x axis, saturation to y.

When a clip is highlighted in the scatterplot, its location is highlighted in the timeline view above.

As it is written with HTML5/Javascript for the web, you may try it out if you wish, though bear in mind it is but a prototype and is quite experimental at present.

The topic of my research at the Jan van Eyck is "compression."

Compression -- as pertains to the moving image -- is generally understood on a technical level. Movies are "compressed" into smaller file sizes to allow for their distribution through digital mediums, most recently the Internet. Compression, then, is the basis and enabling force behind digital networked communication. When it is successful, compression is invisible, imperceptible to the human eye; a bias of the flow and intensity of imagery towards recognition by the human visual system. Compression inherently involves a determination of what information is most important, and a purge of the rest. For example, compressed images lose disproportionately more detail in their dark regions, where human eyes are less sensitive.

Considered as a filtering protocol, the idea of compression can be applied more broadly to the production of moving images: editing a film entails a "compression" of many hours of footage into a comparatively short piece. In many cases, this compression too is deemed successful when it is imperceptible. The final result of editing should appear "complete" even though it is obviously missing most of what was filmed (not to mention what was never even recorded in the first place). And how few people there are who determine the parameters of compression -- the relative importance of information -- for so many! Compression of information into video is highly centralized.

Directly between the social and technical compression of the moving picture is the editing interface: it is designed to facilitate the social process of compression and then perform an additional level of algorithmic compression to finally yield a form suitable for dissemination. And unsurprisingly its structure mirrors and re-enforces the social structure in which it operates: it is designed for use by a single operator to determine a canonical subset of closely-guarded, privately-held, source video for "read-only" distribution to a passive audience.

It is at this site of production, the video editing interface, where I

have started a multifaceted investigation into "compression," in

particular as concerns the organization and distribution of media.

No technique of compression is universally effective.

Compression requires making assumptions, limiting the space of possibility.

Noise is un-compressible.

By its very definition, the only pattern you can find in noise is its

exact inverse.

This is a game where you align noise with its mirror image.

The most secure form of cryptography -- used for nuclear bomb authorizations and the such -- relies on noise. Conspiring parties (and they alone) share a code book, which contains random noise. The sender adds noise from the code book to the message, and the recipient then subtracts the same noise, decoding the message. Without the code book, it is mathematically impossible to decypher the message.

The result of total compression is noise: a message is maximally compressed when it is no longer compressible, and when it is no longer compressible it lacks patterns and is thereby noise. Only with knowing the assumptions of the compression (the code book) can the original be recovered.

In this way, encryption and compression are highly related representations (also called encodings).

We can move beyond Noise to Signal.

Any encoded material requires decoding.

In this sister game to Noise--still under some development, and coming out of a year-long conversation with a collaborator in Cambridge, MA--a transmission appears garbled because it is not being decoded properly. The game is to find the decoding parameters (width, height, and phase).

src (with numm)

Representation is the translation of external reality into the

symbolic domain. Also recursively, a translation between one set of

symbols and another.

An image is often represented as a matrix of sampled intensity values, but that's not the only method of representation.

By representing an image as a summation of periodic functions, we can compress the image by reducing frequencies that are barely used.

Single modifications in the frequency domain effect the entire image.

We can also adjust the imaginary (phase) component in the frequency bands.

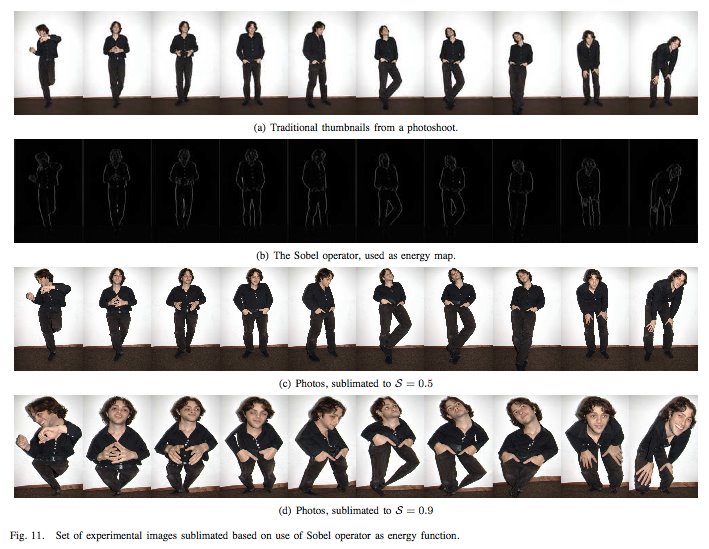

Edges may be a better guide to `importance' for a human than periodicity.

Compression is usually accompanied by its reverse, but my research explores not reversing the compression. Using distortion, loss, to communicate more concisely.

Sublimation interface (with masha)

Compression creates proxy objects, which may be used in place of the

original.

Here we compress time into space.

60 seconds of video at a time are rendered into a still image.

Moving the mouse along the x axis controls the width of each

thumbnail.

The scroll wheel controls which section of the image to select.

At the far edge we get a slit scan which shows one column of pixels per frame.